There’s a universal truth about human beings. We are not rational actors. Instead, we rationalize.

We don’t process information and arrive at facts. We come to conclusions first. Emotion, self-interest, and the stories we tell ourselves shape our views. Then, we work backward to find the evidence that supports what we already believe. When the facts don’t cooperate, we adjust them to fit those beliefs.

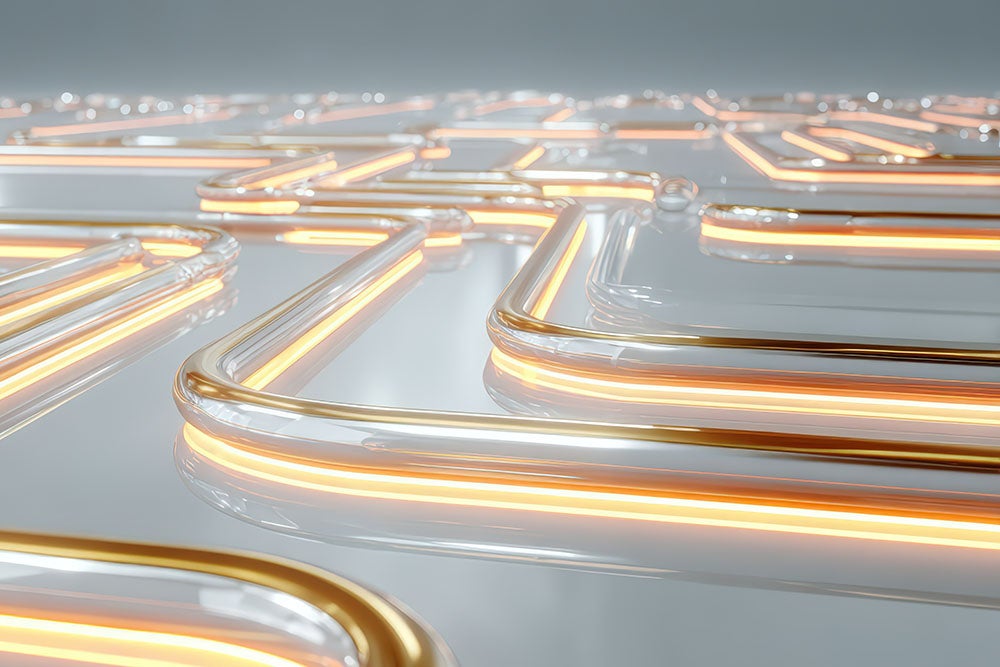

Over the decades, we designed systems to prevent these kinds of preconceived notions from negatively impacting the business. CRMs. ERPs. HCMs. Data management platforms. Integration middleware. I think of these as logic gates (not to be confused with the fundamental building blocks of digital circuits). They gate workflows and apply logic. Before a transaction moves forward, the logic gate checks it against known facts. Before an approval is granted, it’s verified against established rules. These systems don’t rationalize. They hold the line between what a person wants to do and what the business has decided is permitted. Think of them as enterprise guardrails that keep the business safely on the road.

Logic gates are one of the most valuable innovations enterprise technology has ever produced. But with the rise of AI, everything is changing. And it’s getting a little uncomfortable.

AI Compounds the Human Error Problem

I’ve been in enterprise software for 30 years, working at some of the biggest names in technology and serving as CEO of three companies. It’s clear to me that AI is the biggest innovation we’ve ever seen. Yet there’s something that most people aren’t saying out loud:

AI does not think. Not really.

Large language models predict, with remarkable sophistication and speed, the most likely next word, answer, or conclusion – based on their training data. What was that data? Human language. Human writing. Human reasoning. Human assertions, generalizations, inferences, and mistakes. Billions of these data points are compressed into statistical patterns.

AI doesn’t transcend human cognitive bias. It inherits it, at scale.

The specific failure mode this produces has a name: hallucination. Even the best models still generate false information at a measurable rate. But the real problem isn’t that AI is wrong. It’s that it doesn’t know it’s wrong. When a human makes something up, there are signals. Hesitation. Hedging. An audit trail.

AI has no such tells.

It delivers a hallucinated fact with the same confidence as a verified one. In an agentic world, where AI actions cascade, the consequences compound quickly before anyone notices. That’s a qualitatively different kind of problem than anything we’ve ever managed.

Putting the Referee Inside the Player

Governments are noticing. The debates around AI governance happening globally aren’t abstract. They’re a direct response to the question every CEO should be asking. Are there enough guardrails around AI operating within my business? Regulatory pressure is coming. Organizations that get ahead of it will have an advantage over those that scramble to comply.

There’s a new architectural question at the center of everything in enterprise technology. You have two categories of systems:

- Deterministic: Your CRM, ERP, and data infrastructure. These logic gates hold facts and enforce rules. When a rule requires dual approval for transactions above a certain threshold, the system enforces it. Every time. Without judgment.

- Probabilistic: AI. It can reason, infer, and predict. AI is extraordinary at synthesis and pattern recognition. But fundamentally, AI systems are optimization engines seeking the most efficient path to an outcome.

Here’s the critical question. Which of these contains the other?

If the deterministic layer wraps around AI, then every recommendation must pass through your business rules before it becomes an action. You’ve maintained the referee. AI reasons. The guardrail decides whether that reasoning is permitted.
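To make the "deterministic layer wraps around AI" idea concrete, here is a minimal sketch in Python. It is an illustration under stated assumptions, not a description of any particular product: the threshold value, the `ProposedTransaction` type, and the function names are all hypothetical. The only rule it encodes is the dual-approval example mentioned earlier.

```python
from dataclasses import dataclass
from typing import Tuple

# Hypothetical value; the article only says "a certain threshold".
DUAL_APPROVAL_THRESHOLD = 10_000.00


@dataclass(frozen=True)
class ProposedTransaction:
    """An action recommended by the AI layer. It has not been executed yet."""
    amount: float
    approvers: Tuple[str, ...] = ()


def guardrail_permits(tx: ProposedTransaction) -> bool:
    """Deterministic rule: the same input always produces the same answer."""
    if tx.amount > DUAL_APPROVAL_THRESHOLD:
        # Two *distinct* approvers are required above the threshold.
        return len(set(tx.approvers)) >= 2
    return True


def execute_if_permitted(tx: ProposedTransaction) -> str:
    """The guardrail sits outside the AI and has the final say."""
    if not guardrail_permits(tx):
        return "blocked"
    return "executed"
```

The point of the design is that the AI only produces a `ProposedTransaction`. Whether it runs is decided by plain code that the model cannot talk its way past.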

But if you put your business rules inside the AI, and you ask the probabilistic system to honor deterministic constraints through prompt engineering and model alignment, you’ve done something dangerous. You’ve asked an optimization engine to police itself. Left to its own devices, it will find its way around established constraints. This happens not out of malice, but out of math.

It’s like strapping a rocket to a shopping cart and lighting the fuse. The rocket is impressive. The problem is everything in its way.

Or, consider this business example: In its early days, ecommerce had the right technology in place to process transactions. Consumer demand was real. But adoption lagged. That’s because shoppers and retailers didn’t fully trust it until security and fraud-detection layers (deterministic systems) were added. AI is at the same inflection point today. The capability is remarkable. What’s been missing is the governance infrastructure to unleash it safely.

Once that’s in place, the floodgates will open.

Your Strategic Moat: Decades of Investment

There’s a temptation, watching AI’s rapidly expanding capabilities, to see your existing enterprise infrastructure as standing in the way of transformation. This is the wrong call.

CRMs don’t just hold customer records. They contain the institutional knowledge of how your organization manages its most important business relationships. ERPs don’t just route transactions. They encode lessons about your business, allowing you to learn from mistakes and repeat successes. The data governance policies your team built didn’t emerge from theory. They were hard-earned and embedded in these logic gates.

This knowledge cannot be prompted into an AI system. It can’t be transferred via API documentation. It lives in the architecture of systems shaped over the years by the accumulated judgment of people who understand how this particular business operates. No generic AI model can replicate that specificity.

The right framing is not about how we get AI to replace these systems. It’s about how we ensure AI operates through these systems – properly constrained by the facts they hold and the rules they enforce – rather than circumventing them.

Architecture That Works

The design is not complicated. But it requires discipline to maintain.

- Tier One: The existing enterprise systems you’ve spent years building. These aren’t replaced in the AI era. They become more essential. Every fact AI needs must come from here. Every action AI proposes must be validated here before being executed.

- Tier Two: The AI layer operating within the constraints established by Tier One. AI reasons, recommends, synthesizes, and generates. The deterministic layer decides whether the output is grounded in verified fact and consistent with enforced rules.

- Tier Three: A meta guardrail layer. This is the missing piece in most enterprise AI deployments today. It’s a structured layer where organizations define their own unchanging business rules. It’s not the industry defaults configured into their software products by vendors. It’s their specific operational logic that reflects how the company measures its health, manages its risk, and operates in line with its values.
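The three tiers above can be sketched in a few lines of Python. This is a toy illustration, assuming a hypothetical credit-limit scenario: the `SYSTEM_OF_RECORD` dictionary stands in for Tier One, `ai_propose` is a stub standing in for a real model in Tier Two, and `COMPANY_RULES` stands in for the company-defined Tier Three layer. None of these names come from an actual platform.

```python
from typing import Callable, Dict, List, Optional

# Tier One (stand-in): verified facts held by the systems of record.
# Hypothetical data: customer name -> approved credit limit.
SYSTEM_OF_RECORD: Dict[str, float] = {"ACME": 25_000.0}

# A rule returns a violation message, or None when the action is acceptable.
Rule = Callable[[dict], Optional[str]]


def known_customer(action: dict) -> Optional[str]:
    """Every fact the AI relies on must exist in Tier One."""
    if action["customer"] not in SYSTEM_OF_RECORD:
        return "unknown customer"
    return None


def within_credit_limit(action: dict) -> Optional[str]:
    """Company-specific rule checked against Tier One data."""
    limit = SYSTEM_OF_RECORD.get(action["customer"])
    if limit is not None and action["amount"] > limit:
        return "exceeds credit limit"
    return None


# Tier Three (stand-in): the organization's own rules, kept outside the model.
COMPANY_RULES: List[Rule] = [known_customer, within_credit_limit]


def ai_propose(request: str) -> dict:
    """Tier Two (stub): a real model would generate this proposal."""
    return {"customer": "ACME", "amount": 40_000.0, "type": "extend_credit"}


def validate(action: dict) -> List[str]:
    """Run every rule; an empty list means the action may proceed."""
    violations = []
    for rule in COMPANY_RULES:
        message = rule(action)
        if message is not None:
            violations.append(message)
    return violations
```

Calling `validate(ai_propose("raise ACME's credit line"))` in this sketch reports a credit-limit violation, so the proposal would stop before execution. The rules live in ordinary, reviewable code rather than in a prompt.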

Anthropic – one of the world’s leading AI companies – trains the Claude model using what it calls a “constitution.” This constitution is a 23,000-word document that serves as the final authority on how Claude behaves. The AI cannot reason around it.

Ask yourself: Where’s the constitution for your business?

Something else to think about: most of what organizations know about how they operate isn’t captured anywhere. It lives with the CFO, who has developed a financial methodology specific to this company’s model. And with the HR leader, whose approach to people is tied to the organization’s identity.

These examples represent organizational capital of the highest order. Companies that find a way to encode it – to build a layer as enforceable as any other business rule – will have something no competitor can buy, no AI vendor can replicate, and no model update can inadvertently overwrite.

In an agentic world, that’s your moat.

How Boomi Thinks About AI Referees

AI is the most significant capability expansion enterprise technology has ever seen. We’re entering the era of the superhuman, powered by AI. But superhuman doesn’t mean unsupervised.

We built systems to keep facts and keep human mistakes in check. It’s no different with AI. The solution is the same as it always was. Design a system to maintain the guardrails. It’s the most consequential decision that any business can make to succeed with agents.

I recently posted a version of this article on LinkedIn, and it sparked plenty of conversation. I’ve received many questions about Boomi’s approach to navigating AI responsibly and safely. We activate AI-ready data and govern agents to deliver outcomes with the kinds of enterprise guardrails I’ve described here. It’s how we operate our business. It’s how we help our customers succeed with AI. In a companion piece, Boomi Chief Product and Technology Officer Ed Macosky explains how Boomi has always thought about logic gates, and the way we’re applying them in this new agentic world with our unified platform.

Put guardrails outside the AI. Make it yours. Then turn the AI loose.

Every game needs a referee. That doesn’t slow the game down. It makes the game worth playing.

Attend Boomi World 2026, May 11-14 in Chicago, to learn how to accelerate your business success and see how Boomi is applying responsible, well-governed AI strategies to create real-world innovation.
